At iData Global, we’ve spent years developing technology solutions that aim not only to improve efficiency in healthcare systems, but also to honor their deepest purpose: preserving life. As engineers, we work with data. But as a human team, we understand that behind every algorithm lies a story, a patient, and a critical decision. That’s why, when we talk about artificial intelligence in clinical environments, we approach it not as a purely technological exercise but as a responsibility.

Now more than ever, insurers, public entities, academic hospitals, and clinical leaders are facing an urgent question:

How can we ensure that algorithms supporting medical decisions operate in a fair, ethical, and controlled manner?

AI Governance in Healthcare: More Than Just Compliance

AI governance is not merely a technical or legal issue; it is, above all, a matter of trust. It involves creating structures, processes, and principles that ensure healthcare AI models are:

- Explainable and auditable

- Free from harmful bias

- Used under medical supervision

- Accountable for the outcomes they generate

And while regulatory frameworks across Latin America are still evolving, more and more organizations are requiring their technology partners to operate under robust and verifiable self-governance standards. At iData Global, we see this not just as a requirement, but as an ethical commitment to our strategic partners.

Real Risks We Cannot Ignore

We recognize that AI can amplify health inequities if models are trained on non-representative data or deployed without clinical validation. A poorly calibrated model could delay critical treatment or overlook a serious condition—leading to irreversible consequences.
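One way to make this risk measurable is to compare how often a model catches true cases in each patient subgroup. The sketch below is illustrative only: the function names, the group labels, and the toy data are assumptions, not part of any iData Global system. It computes sensitivity (recall) per group and the gap between the best and worst served groups.

```python
from collections import defaultdict


def sensitivity_by_group(y_true, y_pred, groups):
    """Return {group: sensitivity} over positive cases.

    Groups with no positive cases are omitted, because sensitivity
    is undefined for them.
    """
    true_positives = defaultdict(int)
    positives = defaultdict(int)
    for truth, prediction, group in zip(y_true, y_pred, groups):
        if truth == 1:
            positives[group] += 1
            if prediction == 1:
                true_positives[group] += 1
    return {g: true_positives[g] / positives[g] for g in positives}


def sensitivity_gap(per_group):
    """Difference between the best and worst group sensitivity."""
    values = list(per_group.values())
    return max(values) - min(values) if values else 0.0


# Toy data, invented for illustration (1 = condition present / flagged).
y_true = [1, 1, 1, 1, 0, 1, 1, 0]
y_pred = [1, 1, 1, 0, 0, 1, 0, 0]
groups = ["A", "A", "A", "A", "A", "B", "B", "B"]

per_group = sensitivity_by_group(y_true, y_pred, groups)
print(per_group)                   # {'A': 0.75, 'B': 0.5}
print(sensitivity_gap(per_group))  # 0.25
```

A large gap does not prove the model is unfair on its own, but it is the kind of signal that should trigger clinical review before deployment.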

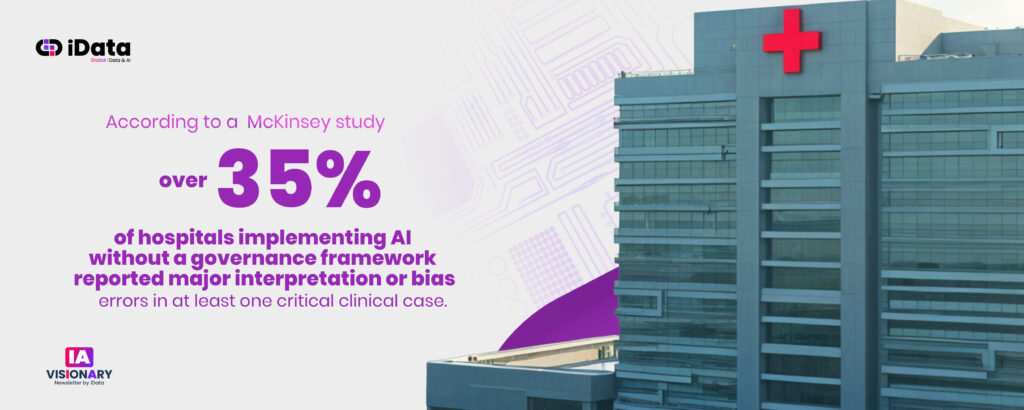

According to a 2024 McKinsey study, over 35% of hospitals implementing AI without a governance framework reported major interpretation or bias errors in at least one critical clinical case.

Gartner also projects that by 2026, 50% of AI-supported clinical decisions in high-level institutions will be subject to mandatory ethical review. This highlights a clear trend toward algorithmic accountability—one that no healthcare leader can afford to ignore.

Putting Governance into Practice

In our experience, responsible clinical AI requires intentional design, including:

- Algorithm traceability mechanisms (Who trained it? With what data? Under what assumptions?)

- Medical validation checkpoints at every stage

- Active involvement of clinical and ethics committees during development

- Routine audits for bias, accuracy, and real-world performance
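As a minimal sketch of the traceability and validation-checkpoint ideas above, the record below stores who trained a model, with what data, and under what assumptions, and blocks deployment until a clinical reviewer has signed off at each stage. The class name, field names, and stage names are hypothetical and chosen only for illustration; a real project would define its own.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Dict, List

# Hypothetical checkpoint names, assumed for this example.
REQUIRED_STAGES = ("design", "training", "pre_deployment")


@dataclass
class ModelTraceRecord:
    model_name: str
    trained_by: str           # who trained it
    training_dataset: str     # with what data
    assumptions: List[str]    # under what assumptions
    trained_on: date
    # Maps each validation stage to the clinical reviewer who approved it.
    clinical_signoffs: Dict[str, str] = field(default_factory=dict)

    def sign_off(self, stage: str, reviewer: str) -> None:
        """Record a clinical reviewer's approval for one stage."""
        if stage not in REQUIRED_STAGES:
            raise ValueError(f"unknown stage: {stage}")
        self.clinical_signoffs[stage] = reviewer

    def missing_signoffs(self) -> List[str]:
        """Stages that still lack a clinical sign-off, in order."""
        return [s for s in REQUIRED_STAGES if s not in self.clinical_signoffs]

    def ready_for_deployment(self) -> bool:
        """True only when every required stage has been approved."""
        return not self.missing_signoffs()


# Example values are invented for illustration.
record = ModelTraceRecord(
    model_name="example-risk-model",
    trained_by="data-science-team",
    training_dataset="registry-extract-v1",
    assumptions=["adult patients only"],
    trained_on=date(2024, 1, 15),
)
record.sign_off("design", "Dr. A")
print(record.missing_signoffs())      # ['training', 'pre_deployment']
print(record.ready_for_deployment())  # False
```

Keeping the sign-offs inside the same record as the training metadata means an auditor can answer "who approved this, and on what basis?" from a single object.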

This approach not only reduces legal and reputational risks—it also increases adoption by healthcare professionals who need to trust the tools they use in daily clinical practice.

Ethical Governance in Action: Measurable Outcomes, Human Impact

In the case studies of Colombia’s Cuenta de Alto Costo and Chile’s Asociación Chilena de Seguridad (ACHS), implementing ethical governance principles from the outset led to AI solutions that prioritized both clinical safety and institutional trust.

From reducing critical wait times in breast cancer care to responsibly deploying computer vision models in radiology, both projects demonstrated that it’s possible to scale AI without compromising clinical judgment or systemic equity.

Want to dive deeper into how these outcomes were achieved?

Read the previous article here

Institutional Leadership: Beyond the Technical Scope

For clinical directors, innovation leads at insurance companies, regulatory leaders, and academic hospital executives, the challenge is not simply to use AI—but to adopt it with purpose, oversight, and ethical vision.

This requires:

- Establishing clear internal governance policies

- Demanding transparency from technology providers

- Training clinical teams to critically interpret algorithmic outputs

- And most importantly: actively participating in the design of the technologies being adopted

At iData Global, we don’t deliver black boxes. We co-build platforms with our partners—offering full traceability, explainable logic, and shared oversight. In this way, each algorithm becomes a tool in service of human judgment, not a replacement for it.

Collaborative Governance: A Shared Responsibility

We firmly believe that healthcare AI governance must not be top-down or unilateral. It must emerge from a dialogue between clinicians, engineers, legal experts, patients, and decision-makers. Only then can we build governance frameworks that are solid, trustworthy, and sustainable.

That’s why we actively promote open spaces for technical and ethical discussion in every project we undertake. And we do so with humility—knowing that while technology evolves quickly, trust takes time to build.

What Must Never Be Lost: Human Judgment

In this journey of data, algorithms, and decision-making, one truth remains: human judgment is still the heart of healthcare.

AI can analyze millions of records, but it cannot hear a patient’s anxiety in their voice, or perceive the social context behind a medical decision. Only healthcare professionals can do that. And our role as engineers is to support them with tools that enhance—not override—that unique ability to understand, empathize, and decide.

Healthcare doesn’t need machines that replace people. It needs intelligence that empowers them. And that’s what we strive for every day at iData Global.

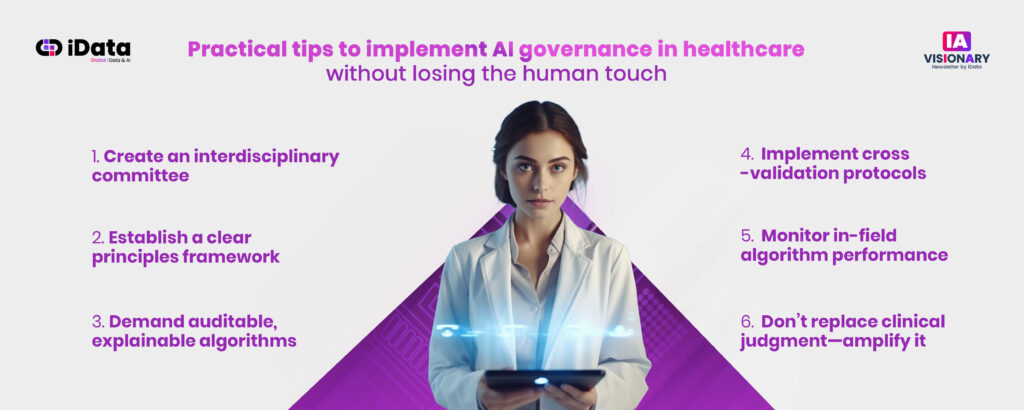

Practical Tips to Implement AI Governance in Healthcare—Without Losing the Human Touch

We know that advancing toward responsible AI isn’t just a tech decision; it’s a cultural transformation within each institution. Based on our experience in real-world projects, here are a few practical, ethical steps for effective implementation:

- Create an interdisciplinary committee

Include clinicians, engineers, legal experts, and patient or ethics representatives from the start. Governance must be built collaboratively.

- Establish a clear principles framework

Privacy, equity, traceability, explainability, and clinical validation should be defined from the model design phase.

- Demand auditable, explainable algorithms

Avoid “black boxes.” Ensure every model provides clear insights into why it makes decisions, and based on what variables.

- Implement cross-validation protocols

Test models on real-world data with clinical specialists evaluating outputs before production deployment.

- Monitor in-field algorithm performance

Governance doesn’t end at go-live. Set continuous evaluation routines, bias adjustments, and responsible model updates.

- Don’t replace clinical judgment; amplify it

Set clear boundaries where human decisions always take precedence. AI is a companion, not a sole decision-maker.
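The last tip can be enforced in software rather than left to policy alone. The following is a minimal sketch, with hypothetical names and fields, of a decision step in which the model only suggests: nothing is finalized without a clinician, and the clinician's decision always takes precedence over the AI output.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Recommendation:
    label: str         # what the model suggests
    confidence: float  # model score in [0, 1]; never used to bypass review


def final_decision(ai: Recommendation, clinician_label: Optional[str]) -> dict:
    """Combine an AI suggestion with a clinician's decision.

    The clinician's decision always wins. Without one, the case stays
    pending, however confident the model is.
    """
    if clinician_label is None:
        return {
            "status": "pending_clinical_review",
            "decision": None,
            "ai_suggestion": ai.label,
        }
    return {
        "status": "final",
        "decision": clinician_label,
        "ai_suggestion": ai.label,
        # Logged so that routine audits can study disagreements.
        "overridden": clinician_label != ai.label,
    }


suggestion = Recommendation(label="routine follow-up", confidence=0.97)
print(final_decision(suggestion, None)["status"])  # pending_clinical_review
print(final_decision(suggestion, "urgent referral")["decision"])  # urgent referral
```

Recording whether the clinician overrode the suggestion also feeds the continuous evaluation described in the monitoring tip.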

Adopting these practices not only mitigates legal and operational risks; it also strengthens trust across the clinical ecosystem and protects patient dignity.

Join Our Next Free Webinar

“From Data to Diagnosis: When Data Speaks First”

Want to understand how AI can anticipate risks, personalize treatments, and optimize clinical decisions—while upholding ethics, governance, and human judgment?

This webinar is designed for clinical leaders, insurers, regulatory bodies, and academic hospitals seeking to implement AI responsibly.

Because in healthcare, data may speak first, but decisions must remain human.